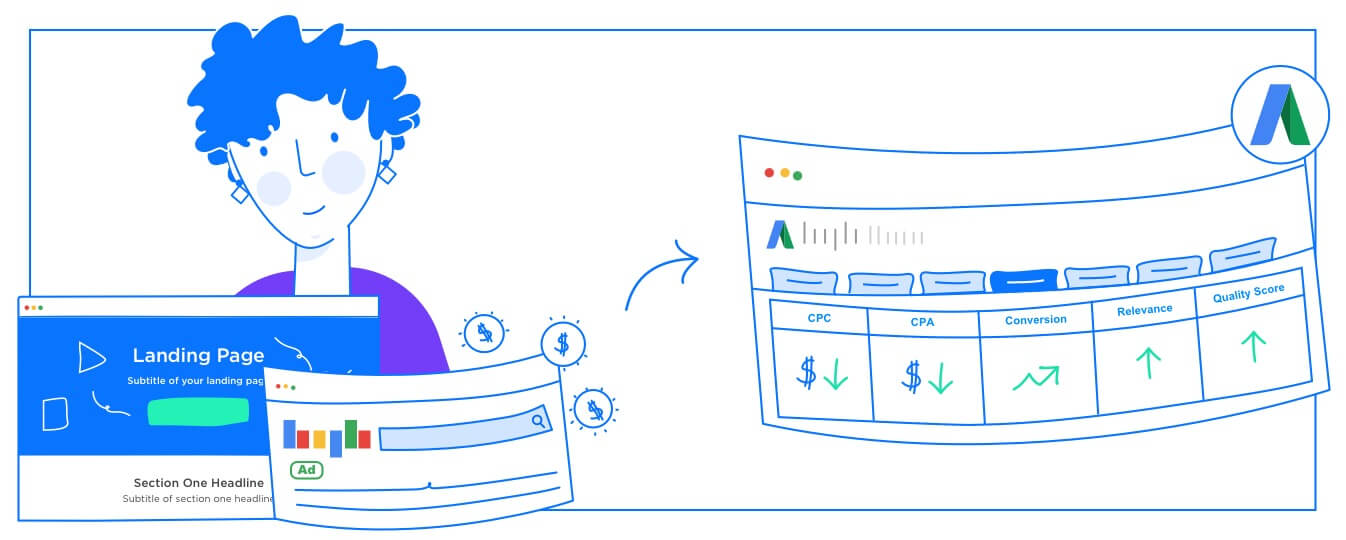

Ad testing can be a time-consuming part of PPC optimization, so it’s a great area to tackle with a Google Ads script.

In this post, I’m sharing a script that automates the tedious task of putting together quick stats about which historical ad texts have worked well and may be worth deploying in other ad groups.

The script splits all ads into their component parts, like headlines and descriptions, and totals the metrics across the account.
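To make the idea concrete, here is a minimal sketch of splitting ads into components and totaling their metrics. It is not the script’s actual code: the sample rows, the field names (`headline1`, `headline2`, `description`), and the `totalByComponent` helper are all hypothetical, and a real run would read its rows from an ad report instead of a hard-coded array.

```javascript
// Hypothetical sample rows; a real run would read these from an ad report.
const ads = [
  { headline1: "Free Shipping", headline2: "Shop Now", description: "Huge selection.", impressions: 1000, clicks: 50 },
  { headline1: "Free Shipping", headline2: "Order Today", description: "Huge selection.", impressions: 500, clicks: 20 },
  { headline1: "Low Prices", headline2: "Shop Now", description: "Fast delivery.", impressions: 800, clicks: 24 },
];

// Sum impressions and clicks for every distinct text within each component type.
function totalByComponent(rows, componentFields) {
  const totals = new Map();
  for (const row of rows) {
    for (const field of componentFields) {
      // Key on component type plus text so the same words used as
      // headline 1 and headline 2 are counted separately.
      const key = field + "|" + row[field];
      const entry = totals.get(key) ||
        { component: field, text: row[field], impressions: 0, clicks: 0 };
      entry.impressions += row.impressions;
      entry.clicks += row.clicks;
      totals.set(key, entry);
    }
  }
  return Array.from(totals.values());
}

const report = totalByComponent(ads, ["headline1", "headline2", "description"]);
```

Each entry in `report` holds one component text with its metrics summed over every ad that used it, which is the shape of data the rest of this post works with.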

If the idea of this script sounds familiar, that’s because I’ve shared other iterations in the past; however, this one is different.

I first created this script for Optmyzr’s scripts library and later shared a version called the Ad Component Report on GitHub.

So what’s different now, and why is this latest version worth a look?

Well, I made a big change to the code that addresses a problem I’ve started hearing more about recently…

Why A/B Ad Testing Is Flawed

The problem with ad testing is that advertisers may believe it’s only the ad text that influences performance.

But as Martin Roettgerding recently pointed out, an A/B ad test can’t be fully trusted because the difference in text between ad variations is merely one of the many variable factors of an experiment.

The other factor that changes is the auctions in which the ad is shown. In different auctions, the users are different, the time of day is different, the device is different, and so on.

In fact, there are so many variables in play that it’s not fair to point at ad text differences as the sole reason why A/B ad tests produce winners and losers.

Mike Rhodes, the founder of AgencySavvy, recently told me about a surprising ad test he ran. Rather than doing an A/B test with variations of an ad, he ran an A/A test in which two ads contained exactly the same text.

Logic would dictate that there should have been no winner or loser in this test, but he found that ad A won and ad A lost.

The exact same ad both won and lost the experiment!

There couldn’t be a clearer example to highlight that there’s more than just the ad text that influences how tests go.

Segment Ad Data for Proper Experiments

The way to solve this problem is to eliminate as many of the variables as possible when analyzing ad performance data.

You remove much of the variability associated with query differences by analyzing ads from one ad group or even one exact match keyword at a time.

By adding segments for devices, days of the week, and ad slots, you can get down to specific scenarios where you have as close as possible to an apples-to-apples comparison of the performance of different ad texts.

When different ads are shown in scenarios that closely resemble one another, you can start to point to the ads as the primary driver of differences in performance.

Data Sparsity When Segmenting Ads Data

The more we segment the data, the more granular the data becomes, and the harder it is to find a statistically significant winner.

You can try one of the many statistical significance calculators available online (like this one) to see how lower metric volumes make it harder to find a significant winner.
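As a rough illustration of that effect, the sketch below applies a standard two-proportion z-test to the CTRs of two ads. This is one common way such calculators work, not necessarily the method used by any particular tool, and the click and impression counts are invented for the example.

```javascript
// Two-proportion z-test: how many standard errors apart are two CTRs?
function ctrZScore(clicksA, impressionsA, clicksB, impressionsB) {
  const ctrA = clicksA / impressionsA;
  const ctrB = clicksB / impressionsB;
  // Pooled CTR under the assumption that both ads perform the same.
  const pooled = (clicksA + clicksB) / (impressionsA + impressionsB);
  const standardError = Math.sqrt(
    pooled * (1 - pooled) * (1 / impressionsA + 1 / impressionsB)
  );
  return (ctrA - ctrB) / standardError;
}

// |z| >= 1.96 corresponds to roughly 95% confidence in a two-sided test.
function isSignificant(z) {
  return Math.abs(z) >= 1.96;
}

// Invented numbers: the CTRs are identical in both cases (5% vs. 4%),
// but the second case has one tenth of the volume.
const zAllData = ctrZScore(500, 10000, 400, 10000);
const zOneSegment = ctrZScore(50, 1000, 40, 1000);

console.log(isSignificant(zAllData), isSignificant(zOneSegment));
```

With the same 5% versus 4% CTRs, the full data set clears the 95% threshold while the smaller slice does not, which is the sparsity problem in miniature.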

Trading Off Volume & Similarity of Data

So, we have to make tradeoffs and decide how to balance having enough data with having it come from similar auctions. This is where the script comes in.

Previous iterations of it did not take segments into account. While they had plenty of data for each metric, that data came from every possible scenario in which the ad ran.

In other words, in the trade-off I described, I favored lots of data over getting data from similar scenarios.

With this version of the script, you can get more data by including many campaigns while limiting the data to a chosen segment. With the script’s output, it’s now possible to run a few interesting comparisons.

How to Use the Segmented Ad Component Report

Here’s an example of how to use the report generated by the script.

Say you find that there is a big difference in ad headline performance between mobile and desktop devices. That can be a sign that it may be worth splitting up campaigns so you can run different ads for different devices.

You could use device bid adjustments, but those don’t afford much flexibility in varying the messages you show to particular users depending on their device.

When looking for differences in performance, focus primarily on ratio metrics like CTR and conversion rate.

There may be big differences in impression levels; however, that may be because a different volume of searches happens on different devices or because Google is already doing a good job of showing the right ads depending on the user’s device.

What matters more is that the ratio of clicks to impressions (CTR) is as high as possible, regardless of the device.

Again, Google might already be doing a good job of showing the ad variation with a higher chance of getting a good CTR on each device. Still, if you find that there are different winners for different devices, it may be time to mix things up with segmented campaigns.
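The comparison described above could be sketched as follows. The rows, the `Mobile` and `Desktop` labels, and the `bestHeadlineByDevice` helper are hypothetical stand-ins for the segmented report output, whose actual column names and device values may differ.

```javascript
// Hypothetical segmented rows: one row per headline and device.
const segmentedRows = [
  { headline: "Free Shipping", device: "Mobile", impressions: 2000, clicks: 120 },
  { headline: "Free Shipping", device: "Desktop", impressions: 1500, clicks: 45 },
  { headline: "Low Prices", device: "Mobile", impressions: 1800, clicks: 72 },
  { headline: "Low Prices", device: "Desktop", impressions: 1600, clicks: 80 },
];

// For each device, find the headline with the highest CTR.
function bestHeadlineByDevice(rows) {
  const best = {};
  for (const row of rows) {
    if (row.impressions === 0) continue; // CTR is undefined without impressions
    const ctr = row.clicks / row.impressions;
    if (!best[row.device] || ctr > best[row.device].ctr) {
      best[row.device] = { headline: row.headline, ctr: ctr };
    }
  }
  return best;
}

const winners = bestHeadlineByDevice(segmentedRows);
console.log(winners);
```

In this invented data set a different headline wins on each device, which is the pattern that would suggest trying device-specific campaigns. A gap like this is only worth acting on when each cell has enough volume for the difference to be statistically meaningful.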

The Script

This script has a handful of settings:

CurrentSetting.SpreadsheetUrl

Use this to enter the URL of the Google Sheet that should receive the data. Or enter the text “NEW” to automatically generate a new spreadsheet each time the script runs.

CurrentSetting.Time

Enter the date range for the data, e.g., “LAST_30_DAYS”, “LAST_MONTH”, or “20180101,20181231”.

CurrentSetting.AccountManagers

A comma-separated list of Google usernames of all the people who should have permission to work with the report in Google Sheets.

CurrentSetting.EmailAddresses

A comma-separated list of email addresses that should get an email when a new report is ready.

CurrentSetting.CampaignNameIncludesIgnoreCase

Enter a string of text that must be present in the names of the campaigns whose data should be included in the report.

CurrentSetting.Segment

This new setting tells the script what additional data columns to add for different segments.

Choose from any segment that is available for the AD_PERFORMANCE_REPORT from the Google Ads API, e.g., “Device”, “DayOfWeek”, or “Slot”.
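Put together, a configuration might look something like the sketch below. The property names mirror the settings described above, but their exact spelling and casing in the actual script may differ, so treat them as assumptions and check the script itself. The example values and the `validateSettings` helper are hypothetical and not part of the script.

```javascript
// Hypothetical settings object; the names mirror the settings described
// above, but the exact spelling and casing in the real script may differ.
const currentSetting = {
  spreadsheetUrl: "NEW",                    // or the URL of an existing Google Sheet
  time: "LAST_30_DAYS",                     // or a custom range like "20180101,20181231"
  accountManagers: "manager1@example.com,manager2@example.com",
  emailAddresses: "reports@example.com",
  campaignNameIncludesIgnoreCase: "brand",
  segment: "Device",                        // e.g., "Device", "DayOfWeek", or "Slot"
};

// Illustrative sanity checks to catch obvious typos before a run.
function validateSettings(settings) {
  const problems = [];
  const namedRange = /^[A-Z][A-Z0-9_]*$/;   // e.g., LAST_30_DAYS
  const customRange = /^\d{8},\d{8}$/;      // e.g., 20180101,20181231
  if (!namedRange.test(settings.time) && !customRange.test(settings.time)) {
    problems.push("time should be a named range or YYYYMMDD,YYYYMMDD");
  }
  if (settings.spreadsheetUrl !== "NEW" &&
      !/^https:\/\/docs\.google\.com\/spreadsheets\//.test(settings.spreadsheetUrl)) {
    problems.push("spreadsheetUrl should be \"NEW\" or a Google Sheets URL");
  }
  if (!settings.segment) {
    problems.push("segment should name a report segment");
  }
  return problems;
}

console.log(validateSettings(currentSetting));
```

An empty list of problems only means the values are well formed; whether a given segment name is accepted still depends on the report the script queries.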